Just a few days ago (April 16, 2013), I was lucky enough to attend what was, IMHO, the most important talk this month, even though I nearly missed it because of another lunch appointment. The talk was given by Professor Marc Levoy, on the 'Future of Photography' and his personal perspective on 'Google Glass'. This post summarizes the talk's main points, which I selected solely from my own point of view.

First of all, I should give some background on this talk. Professor Marc Levoy is well known to people in computer graphics, multimedia, HCI, and photography, and to people who love disruptive technologies like the Lytro camera, Google Street View, and the SynthCam iPhone application. To me, his whole series of R&D projects is truly 'disruptive', since I could not even imagine such technologies being used in the real world. He must be the kind of person with enough drive to turn ideas into real products. This could be why Google invited him to work on its Google Glass project for two years (starting in 2011). Note that he is now officially on leave from Stanford and working with Google.

AFAIK, this was his first keynote since joining Google. He covered several interesting points, summarized here:

- Google Glass' (current) hardware specifications

- Google Glass' characteristics and differences from other camera devices

- Other almost-feasible image processing techniques for mobile, handheld devices

- Future work toward a 'Superhero Vision' that sees what people's eyes can't

(Note that this summary is based on my notes, so please feel free to leave feedback if something is wrong.)

1. Google Glass' (current) hardware specifications (nothing more than we expected)

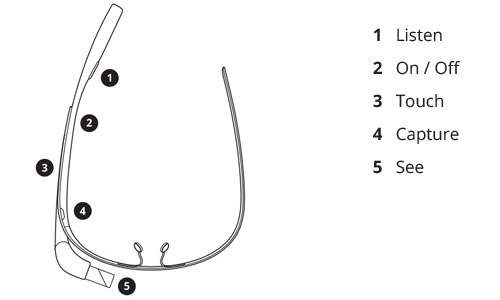

[Image from Google: https://www.google.com/glass/help/#getting-to-know-glass]

- Camera
    - Smartphone-level camera (5~8MP, without flash)
- Lens
    - Wide angle (my guess is a 24~28mm focal length)
- Display
    - Heads-up display (or rather an "eyes-up" display, since you need to look slightly upward to see it)
- Screen
    - Marc Levoy said its resolution is good (but I think it is 640x360 or lower, since there is no need for a higher resolution)
- Sensors
    - Accelerometer, gyroscope, compass, GPS, etc.
- Communication
    - 3G, 4G, WiFi, Bluetooth (probably 4.0 BLE?)
- Other
    - Physical button(s), a touch-sensitive input device, etc.

2. Google Glass' characteristics and differences from other camera devices

- Hands-free
    - Voice command: "OK Glass, Take a Shot!"
- Point of view
    - Always the same as (or similar to) the eye's point of view
- Always available
    - Because we're wearing it (except in restrooms and some pubs where Google Glass is banned)
- Wide field of view
    - Wide-angle lens with a panorama shot feature
- No viewfinder
    - Both a pro and a con (it depends on whom you ask, but it is more frictionless)
- Life-logging
    - Capturing and recalling anything, anywhere, at any time
- Social network integration
    - Integrated with Google Hangouts and Google+

3. Other almost-feasible image processing techniques for mobile, handheld devices

(Note that Professor Marc Levoy's team at Stanford University has already implemented and released an iPhone application called SynthCam, which provides basic versions of several of these features, although they are not very easy to use.)

- Background defocus (shallow depth of field) like SLR cameras (supported by SynthCam)

- Image noise reduction using multiple images (supported by SynthCam)

- Object removal using multiple images (supported by SynthCam)

- Miniature-model photography (supported by SynthCam)

- Multi-point focusing for noise reduction (supported by SynthCam)

- Gyroscope-based reduction of 2D/3D hand shake

- Cinemagraphs (a combination of photo and video)

- 4D light field, etc.

[An example of a cinemagraph. Image from: http://cinemagraphs.com/]
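The two multi-image items in the list above (noise reduction and object removal) both come down to combining a stack of frames pixel by pixel. Here is a minimal Python sketch of that idea; it is not SynthCam's actual implementation, and it assumes the frames are already aligned, grayscale, and stored as nested lists. The names `stack_frames`, `denoise`, and `remove_transients` are hypothetical.

```python
from statistics import fmean, median
from typing import Callable, List, Sequence

Image = List[List[float]]  # grayscale image stored as rows of pixel intensities


def stack_frames(frames: Sequence[Image], combine: Callable[[List[float]], float]) -> Image:
    """Reduce a stack of already-aligned frames to one image, pixel by pixel."""
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    for frame in frames:
        if len(frame) != height or any(len(row) != width for row in frame):
            raise ValueError("all frames must have the same dimensions")
    return [
        [combine([frame[y][x] for frame in frames]) for x in range(width)]
        for y in range(height)
    ]


def denoise(frames: Sequence[Image]) -> Image:
    # Averaging N frames with independent, zero-mean noise divides the noise
    # standard deviation by sqrt(N).
    return stack_frames(frames, fmean)


def remove_transients(frames: Sequence[Image]) -> Image:
    # A per-pixel median keeps a background value wherever a moving object
    # covers that pixel in fewer than half of the frames.
    return stack_frames(frames, median)


if __name__ == "__main__":
    background = [[10.0, 10.0]]
    passer_by = [[10.0, 200.0]]  # a moving object appears in one frame only
    print(remove_transients([background, background, passer_by]))  # [[10.0, 10.0]]
    print(denoise([[[9.0]], [[11.0]]]))  # [[10.0]]
```

The mean is the better choice when every frame shows the same scene with random noise; the median trades a little noise reduction for robustness against objects that pass through only some of the frames.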

4. Future work toward a 'Superhero Vision' that sees what people's eyes can't

- Seeing in the dark
    - New ways to see vividly in darkness
- Low-light imaging
    - Yet another computational method to extend the effective ISO level by compositing multiple images in real time
- Super resolution
    - Using multiple images to increase resolution (known to be ineffective beyond about 2.2x)
- A language translator for the whole world
    - See Word Lens, for example
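The low-light item can be illustrated with simple arithmetic: summing N aligned short exposures gathers N times the light of a single frame, so the result is as bright as one frame at N times the ISO, and with photon (shot) noise alone the signal grows as N while the noise grows as sqrt(N), improving SNR by sqrt(N). The Python sketch below is a hedged illustration under those assumptions (aligned frames, linear pixel values with the white level at 1.0, no read noise); the function names are hypothetical and this is not the method presented in the talk.

```python
import math
from typing import List, Sequence

Image = List[List[float]]  # linear pixel values, 1.0 = sensor white level (assumed)


def merge_low_light(frames: Sequence[Image], white_level: float = 1.0) -> Image:
    """Sum already-aligned short exposures pixel by pixel, clipping at the white level."""
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [min(white_level, sum(f[y][x] for f in frames)) for x in range(width)]
        for y in range(height)
    ]


def effective_iso(base_iso: float, num_frames: int) -> float:
    # The summed image is as bright as one frame shot at num_frames times the ISO.
    return base_iso * num_frames


def shot_noise_snr_gain(num_frames: int) -> float:
    # Shot noise only: signal scales with N, noise with sqrt(N), so SNR gains sqrt(N).
    return math.sqrt(num_frames)


if __name__ == "__main__":
    dark_frame = [[0.25, 0.5]]
    print(merge_low_light([dark_frame] * 3))  # [[0.75, 1.0]]
    print(effective_iso(400, 8))  # 3200
    print(shot_noise_snr_gain(4))  # 2.0
```

Unlike simply raising the gain on one frame, which amplifies its noise along with the signal, summing frames collects more actual light; the clipping step shows why bright regions can still saturate.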

Editorial Comment >

I believe that the future of imaging devices will help blind people.

If I put myself in a blind person's place, the hardest things would be knowing whether the floor is flat and whether I am heading the right way. A device that tells the direction (with the proper position on the road) and the shape of any kind of floor would be extremely helpful. However, I also believe companies will not make much money from this kind of device, since the market is small and blind people are, on average, less well-off than sighted people. In addition, they cannot even see the news or websites that advertise such devices. So I think it is the right time to urge our governments to raise funds for such people. Let's make some change!

- written by ANTICONFIDENTIAL, in SF, on April 19, 2013
